The first day of lockdown meetings nearly broke me. Back-to-back video conferences through the afternoon; by 4 PM, not even a full day in, I closed the laptop genuinely unsure how anyone was supposed to do that job for another week. Two or three weeks later it was just Tuesday. When offices reopened and I spent a full day walking between conference rooms and talking to humans in three dimensions, the way I had done for fifteen years before the pandemic, I came home exhausted in exactly the same way and wondered, again, how anyone was supposed to do that job. A few weeks later, again, it was just Tuesday.

What sticks with me about that loop, beyond the obvious thing about resilience, is how completely the post-adaptation state erases the pre-adaptation state. I cannot really remember what it felt like to find video meetings exhausting; I know I did, I have written evidence, but the feeling is gone. The wall between the two modes is opaque from both sides. You cannot see across it until you are over it, and once you are over it you cannot quite remember what was on the other side.

I have been thinking about that wall in the context of how we are currently building products on top of LLMs, because most of the smart product work happening right now is, I think, work on a wall.

LLMs are stochastic in a way that nothing in mainstream software has been before. Same prompt, different output; same model, different output; sometimes same seed and the floating-point gods still go their own way. We have known this since the Midjourney and ChatGPT moments, both of which somehow now feel like ancient history (a recurring property of this field is that two years ago is the Pleistocene). And yet almost every piece of software the modern knowledge worker uses has spent thirty years promising determinism so thoroughly that the promise stopped being a feature and became a substrate. Move the slider, the pixels move. Hit Cmd-S, the file saves. Call the function, the function runs. Rendering is a quiet exception, but the user only ever sees the converged, de-noised frame.
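For the curious, the floating-point part is easy to see in miniature. Floating-point addition is not associative, so a sum grouped one way can differ in its last bits from the same sum grouped another way, and parallel hardware does not always promise a grouping. The sketch below only shows the arithmetic; it assumes nothing about any particular model or runtime.

```python
# Floating-point addition is not associative: the same three numbers,
# grouped differently, can produce two different doubles.
a, b, c = 0.1, 0.2, 0.3

grouped_left = (a + b) + c   # add a and b first
grouped_right = a + (b + c)  # add b and c first

print(repr(grouped_left))
print(repr(grouped_right))
print("identical:", grouped_left == grouped_right)
```

A difference in the last bit is harmless on its own; it matters only when something downstream, such as picking the larger of two nearly tied scores, amplifies it.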

The product question of the last two years, and the one most of us are still working on, is how to bolt a stochastic engine onto that deterministic substrate without breaking the user. The good answers, the ones that are working, all turn out to involve writing a new contract with the user inside a carefully walled-off part of the product. Lightroom’s generative remove is the example I keep reaching for, less because it is close to my work and more because it is close to my weekends. It is, mechanically, the most non-deterministic thing the product has ever done, but Adobe walled it off cleanly: distinct mode, distinct button, three variations by default, retry until you are happy. The rest of Lightroom is exactly as deterministic as it was in 2008. The contract on the exposure slider is unchanged; the contract on the generative button is new and legible, and the user, encountering it, adapts in about four seconds because the boundary is visible.

I had a version of this conversation with a colleague this week. We are looking at an LLM-powered feature landing at around ninety percent accuracy on the task we care about, and his concern (which I think is real and serious, not curmudgeonly) was that ninety percent will quietly cost us developer trust. Developers are calibrated for a world where the compiler either accepts the program or it does not; a tool wrong one time in ten will, the argument goes, be distrusted after the second miss and abandoned after the third. My response, which I am still mostly sure about, is that ninety percent is fine if and only if the contract is explicit, and that the work is to build a UX where the new contract is as legible as the old one. We will find out in a few weeks.

But the more the conversation stays with me, the more I think we are both solving a transitional problem, and the more I wonder what the same problem looks like once the transition is over.

Because the engineer my colleague is worried about is a particular engineer, with a particular formation. Most of us who ended up in this profession have some version of the same origin story; mine is a triangle on a screen, seventeen years old, and the joy was equal parts the triangle itself and the reliability of it. I had typed an incantation, the machine had obeyed, and the machine would obey again the same way every time I asked. Heisenbugs are part of the folk mythology of the field precisely because they violate that contract; we named them, the way pre-modern cultures named comets, because they were the rare exception to a world that otherwise behaved. The entire emotional substrate of the profession, the thing that makes a certain kind of person stay up until three AM debugging instead of going to sleep, is built on the premise that the system, in principle, behaves.

The engineer we are designing for today is that engineer. The contract we are carefully writing for the “ninety percent feature” is a contract that engineer can read. The wall we are putting around the stochastic part of the product is a wall that engineer needs.

What I do not know, and what I find myself returning to, is what happens to the craft when the next engineer does not need the wall. The eleven-year-old getting into programming this year is not having the triangle moment. They are having some other moment, and I think it is something more like the first time they asked a model to build a small game and it mostly did, and they fixed the parts that were wrong, and the loop of negotiation, not the loop of obedience, is what hooked them. That is a different formation. The dopamine source is different; the substrate of expectations is different; the relationship to the machine is different in a way that the word “different” probably understates.

You can sketch out, without much difficulty, some of the second-order effects once that engineer is the median engineer. The unit of work stops being the function and starts being the intent. Code review stops being a quality gate at the end and becomes most of the job. Reproducibility becomes a thing you opt into for specific surfaces (releases, debugging, regulated systems) rather than the default everywhere. Testing shifts from asserting outcomes to asserting distributions. The senior engineer becomes less the person who can build the system and more the person who can sense where the system is quietly bluffing, which is a kind of expertise the field does not yet really know how to teach and a sentence that will, accurately, annoy a lot of senior engineers.
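To make "asserting distributions" slightly less abstract, here is a minimal sketch of what such a test might look like. Instead of checking one output, it runs the feature many times and asserts that the lower end of a confidence interval on the success rate clears a threshold. Everything named here is an assumption for illustration: `flaky_feature` is a hypothetical stand-in for a model call, and the Wilson score interval is just one reasonable choice of bound.

```python
import math
import random


def wilson_lower_bound(successes: int, trials: int, z: float = 1.96) -> float:
    """Lower end of the Wilson score interval for a binomial proportion."""
    if trials == 0:
        return 0.0
    p_hat = successes / trials
    denom = 1 + z * z / trials
    centre = p_hat + z * z / (2 * trials)
    margin = z * math.sqrt(
        p_hat * (1 - p_hat) / trials + z * z / (4 * trials * trials)
    )
    return (centre - margin) / denom


def flaky_feature(rng: random.Random) -> bool:
    """Hypothetical stand-in for a model call: right about 90% of the time."""
    return rng.random() < 0.9


def passes_distribution_test(feature, trials: int, min_rate: float,
                             rng: random.Random) -> bool:
    """Pass only if we are fairly confident the true success rate >= min_rate."""
    successes = sum(1 for _ in range(trials) if feature(rng))
    return wilson_lower_bound(successes, trials) >= min_rate


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so the test run itself is reproducible
    print(passes_distribution_test(flaky_feature, 1000, 0.85, rng))
```

The design choice worth noticing is that the assertion is about the bound, not the observed rate: ninety successes out of a hundred only supports a claim of roughly eighty-two percent, so a small sample cannot pass a strict threshold by luck.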

The Lightroom equivalent is even further out and even more interesting to think about. The wall Adobe so carefully built between the deterministic sliders and the stochastic generative tools is a wall built for me, the photographer trained on twenty years of deterministic Lightroom. The photographer who learns the craft on a stochastic-first tool may not need the sliders at all, or may keep them around the way we kept the floppy-disk save icon for thirty years after floppies died, as a vestigial pointer to a contract that no longer underlies the thing. The interface to the photograph might just be intent, expressed in language and gesture, with the file as a downstream artifact rather than the unit of work. The skill that matters in that world is not knowledge of the tool; it is taste, the ability to know which of the candidates is the one. Taste replaces correctness as the limiting reagent of the craft. That is also a sentence that will accurately annoy a lot of craftspeople, and probably should. I think it even annoys me!

None of this is a prediction so much as a question I cannot stop turning over. The products we are shipping right now, the ones doing the careful work of writing legible contracts and walling off the stochastic parts, are unambiguously the right products for the user we currently have. They are also, almost certainly, transitional products, in roughly the way that the early web was a transitional medium for documents that wanted to be interactive experiences but were still written as if they were articles in a magazine. The transitional version is necessary; the transitional version is also not the end state, and the end state is opaque from this side of the wall in exactly the way the post-COVID office was opaque from the pre-COVID office.

The thing I keep coming back to is that we will not, I think, remember the wall once we are past it. The engineer who grew up negotiating with stochastic tools will find it as quaint that we ever expected single-shot correctness as we find it that anyone ever expected to know a phone number by heart. The photographer who grew up describing intent will find the slider as quaint as we find the darkroom timer. The transition will, from the other side, look like it took about three weeks, and the things we are working so hard to preserve will be invisibly gone, and the things we cannot yet imagine will just be Tuesday.

The interesting question for anyone building one of these products today is not how to write the right contract for today’s user, although that work is real and necessary. The interesting question is what the product looks like when the contract no longer needs to be written down, because the user no longer remembers a world in which it could have been any other way.

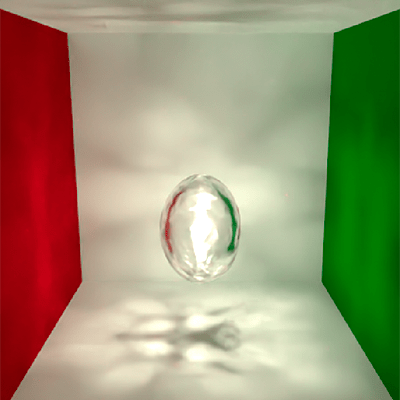

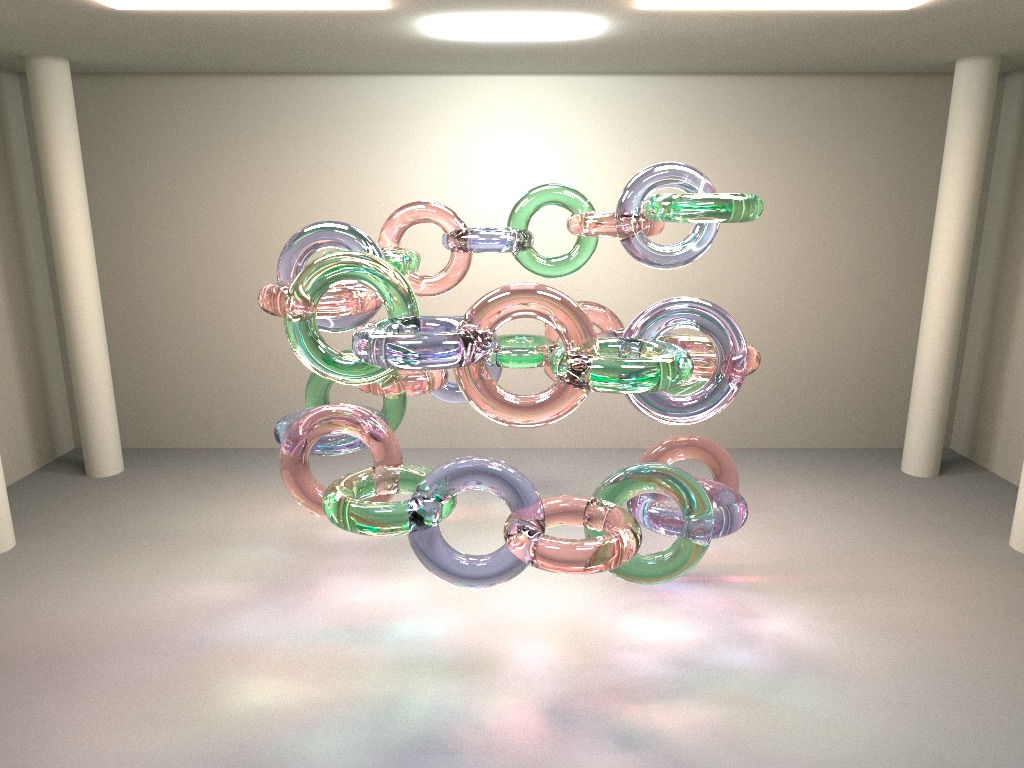

Postscript on the image. The render is the Sponza atrium lit by ten thousand individual candle flames, output from my hobby renderer. Sponza has been the graphics industry’s standard stress-test scene for two decades; ten thousand small emitters in it is a stress test of the stress test, almost entirely of the light-sampling code, since no renderer can afford to evaluate every candle from every shading point and instead has to learn which ones matter. I had implemented a light BVH and path guiding and spent the week getting all the glTF importing just right.
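For readers who have not met the idea, here is a toy version of "do not evaluate every candle". It is not the renderer's code and it is not a light BVH; it is the much simpler power-proportional variant, which picks a few emitters at random with probability proportional to their power and divides each contribution by that probability so the estimate stays unbiased. The scene layout, the function names, and the bare inverse-square falloff (no visibility, no surface response) are all assumptions made for the sketch.

```python
import random
from bisect import bisect_right
from itertools import accumulate


def make_candles(n, rng):
    """Hypothetical scene: n point emitters as ((x, y, z), power) in a slab."""
    return [
        ((rng.uniform(0, 10), rng.uniform(1, 3), rng.uniform(0, 10)),
         rng.uniform(0.5, 1.5))
        for _ in range(n)
    ]


def contribution(light, point):
    """Inverse-square falloff only; occlusion and the BRDF are ignored."""
    (lx, ly, lz), power = light
    px, py, pz = point
    dist_sq = (lx - px) ** 2 + (ly - py) ** 2 + (lz - pz) ** 2
    return power / dist_sq


def exact_lighting(lights, point):
    """The brute-force answer: visit every emitter."""
    return sum(contribution(light, point) for light in lights)


def build_power_cdf(lights):
    """Running sum of emitter power, built once and reused for every point."""
    return list(accumulate(power for _, power in lights))


def estimate_lighting(lights, cdf, point, rng, samples):
    """Monte Carlo estimate using `samples` emitters picked by power."""
    total_power = cdf[-1]
    acc = 0.0
    for _ in range(samples):
        index = bisect_right(cdf, rng.random() * total_power)
        index = min(index, len(lights) - 1)  # guard against rounding at the end
        pdf = lights[index][1] / total_power  # probability of picking this one
        acc += contribution(lights[index], point) / pdf
    return acc / samples


if __name__ == "__main__":
    rng = random.Random(7)
    candles = make_candles(10_000, rng)
    cdf = build_power_cdf(candles)
    shading_point = (5.0, -2.0, 5.0)  # kept below the slab so dist_sq > 0
    print("exact    :", exact_lighting(candles, shading_point))
    print("estimated:", estimate_lighting(candles, cdf, shading_point, rng, 64))
```

Sampling by power alone ignores distance, which is exactly the weakness a light BVH addresses: it estimates importance per cluster of emitters relative to the shading point, so nearby candles get picked more often than bright but distant ones.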